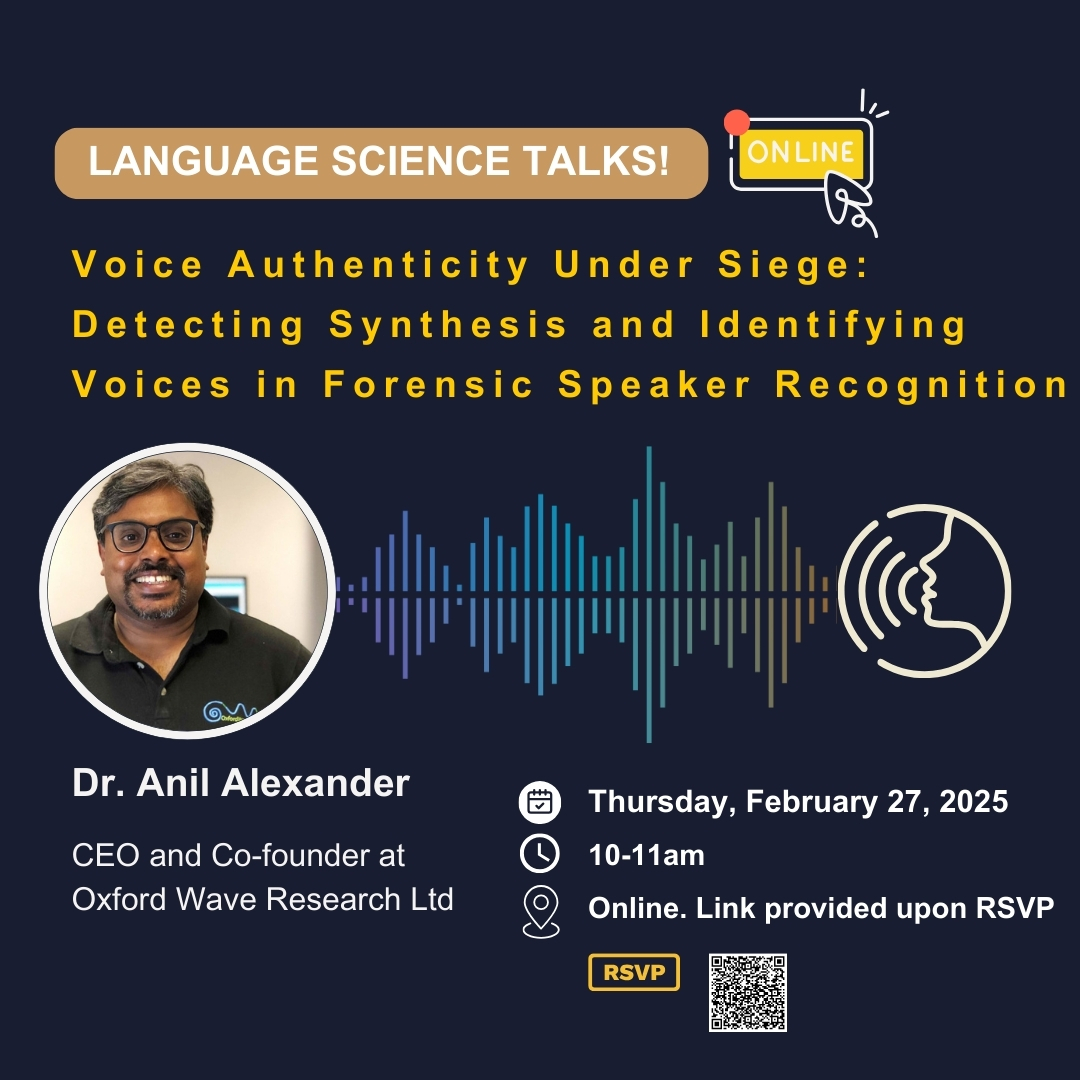

Virtual Talk: Voice Authenticity Under Siege: Detecting Synthesis and Identifying Voices in Forensic Speaker Recognition

February 27, 2025, 10:00 am to 11:00 am

Please RSVP; registration is required for entry.

Dr. Anil Alexander leads Oxford Wave Research's (OWR) work in audio acquisition, processing, and pattern recognition-related product development and R&D services. His interests include forensic audio processing, microphone arrays, and speaker recognition. This talk focuses on deepfake voice cloning and related concerns. Join us online at 10:00 AM PST for Dr. Alexander's talk, "Voice Authenticity Under Siege: Detecting Synthesis and Identifying Voices in Forensic Speaker Recognition."

This talk is online; please see the Zoom details below.

When:

Thursday, February 27, 2025

10:00 - 11:00 AM PST

Please use the Zoom details below to attend virtually:

Link: https://ubc.zoom.us/j/69757429543?pwd=g7oRW4L6ZHxvde7avPVCRfbFaaDqyC.1

Meeting ID: 697 5742 9543

Passcode: 664501

Title: Voice Authenticity Under Siege: Detecting Synthesis and Identifying Voices in Forensic Speaker Recognition

Dr Anil Alexander, CEO, Oxford Wave Research

It is now possible to create highly realistic, natural-sounding synthetic speech samples using either text-to-speech synthesis or voice conversion. Voice synthesis and conversion can successfully mimic both the short- and long-term characteristics of a voice, making the result almost indistinguishable from real speech. Voice conversion also allows users to transform a source voice into a target voice, bypassing linguistic constraints such as slang or dialect. While creating convincing synthetic speech once required significant technical expertise, widely available commercial and open-source toolkits have made this capability accessible to non-technical users. This is evidenced by the proliferation of media reports of deepfake recordings used for fraud, political propaganda, and reputational damage.

There is a real risk that trust in the spoken word could diminish to the point that speech is no longer considered a credible form of evidence. In cases that involve disputed utterances, in addition to considering whether a voice came from a particular speaker, it will also be necessary to consider the hypothesis that the voice is fake. The rise of audio deepfakes naturally raises questions about the role of the forensic audio expert in detecting such material, as well as the potential impact on forensic speaker comparison. Deepfake audio recordings have reached a level of naturalness and sophistication at which they pose a serious threat to the field of forensic speech science.

This talk will provide an overview of the current audio deepfake landscape, explore the creation of deepfakes using commercial and publicly available resources, and demonstrate some possibilities for the detection of synthetic speech, including approaches based on human perception. We will examine how audio deepfake detection can be integrated with traditional forensic speaker recognition workflows within a Bayesian likelihood framework. We will argue that human and computer-based countermeasures for detecting synthetic speech and authenticating voices must be developed urgently and must evolve continuously to address the new algorithms and threats that appear with increasing frequency.